From Replication Rates to Replication Evidence

A Guest Editorial

DOI:

https://doi.org/10.17879/replicationresearch-2026-9577

Keywords:

replicability, knowledge accumulation, credibility, metascience

Abstract

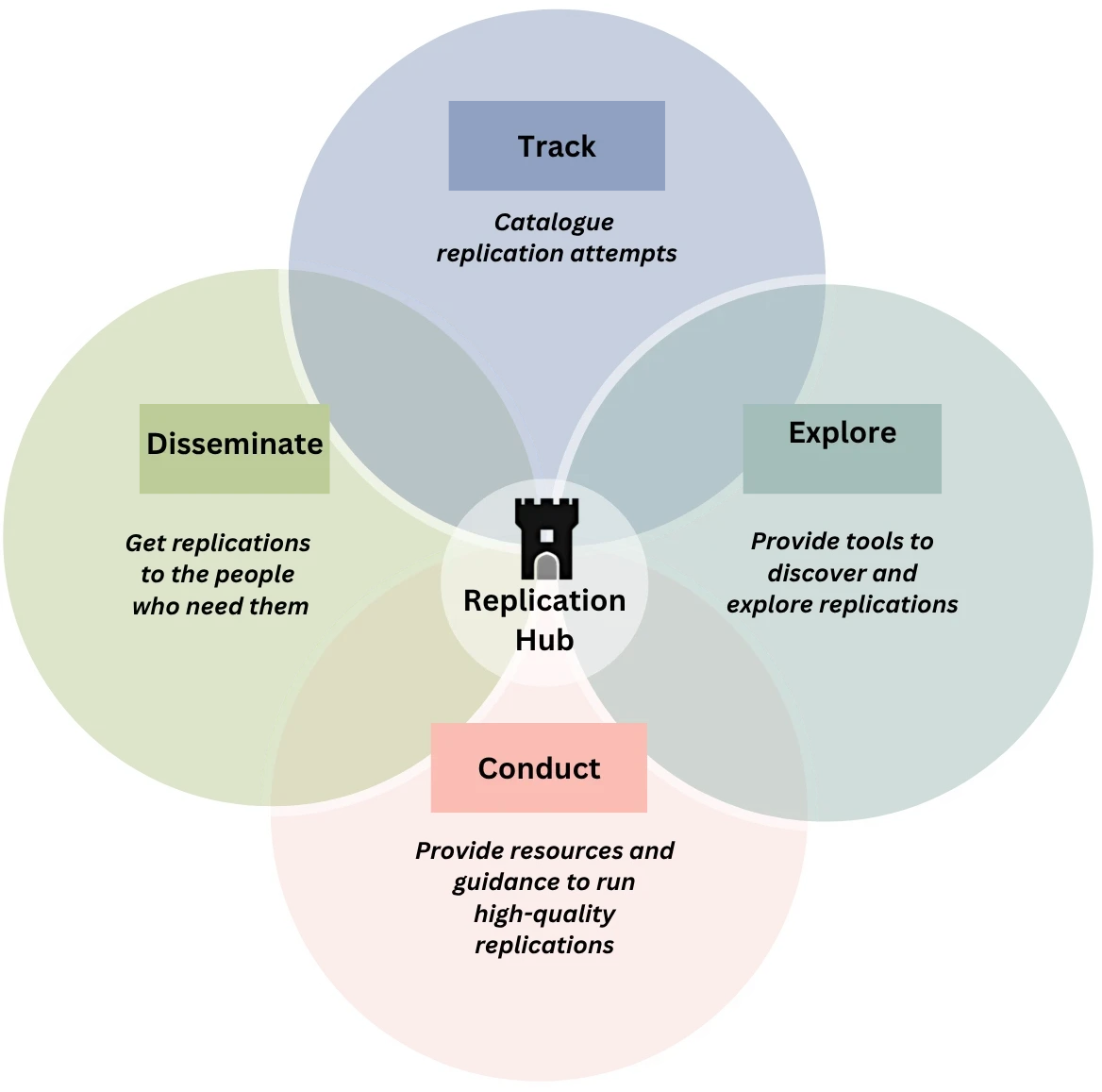

Repeating original studies using existing data (reproducibility) or new data (replicability) is key to improving the credibility of scientific results. Here, we focus on replications, which can be broadly categorized into direct replications—testing the original hypothesis in new data using the original analysis and design—and conceptual replications—testing the original hypothesis in new data using an alternate analysis and/or design. While encouraging replications is important, increasing their visibility and impact is equally crucial. Large-scale replication projects have generated valuable insights into the overall level of replicability across fields, but they typically emphasize aggregate estimates. We argue that this focus obscures the informational value of individual replication studies. Each replication provides independent evidence for updating beliefs about the likelihood that the underlying hypothesis is true and the magnitude of the effect. Publishing and disseminating individual replications as standalone contributions can therefore enhance their role in the cumulative advancement of scientific knowledge.

License

Copyright (c) 2026 Felix Holzmeister, Colin F. Camerer, Florian Cova, Anna Dreber, Magnus Johannesson

This work is licensed under a Creative Commons Attribution 4.0 International License.